I just wrote a very large blog post about kicking frontier LLMs to the curb. The problem I’m facing is that running a useful-scale LLM on my extremely modest PC is not just slow, it’s difficult. I don’t mind waiting 30 minutes or even an hour for it to work on a small piece of a bigger project, but to come back after an hour and realize it made things worse or stopped after 5 minutes means I have to figure out how to kick start it.[1]

My PC isn’t fancy. It’s about 3 years old and has 32 GB of RAM, of which 16 GB is “shared VRAM”, meaning that it’s basically using half of its RAM as if it were VRAM. The result is a machine that’s decent for most work tasks[2] but would have poor performance for games, video editing, big 3D model rendering / editing, and… LLM use. If I had unlimited time and patience, I could probably flog Qwen 3.5 9B with a 4-bit quantization into working well enough over a long enough timeline using my current PC.

I’ve looked into what it would cost to either build a stand-alone system or an entire secondary machine just for these kinds of tasks plus home LLM inference use. None of these options are particularly attractive at this time. Single board computers like the Raspberry Pi, Orange Pi, Jetson Nano and others would probably cost in the range of $500 and probably not crack 5 tokens per second. A GPU in an external enclosure would probably cost around $700 for 16GB and could possibly run up to 40 tokens per second. However, it would probably be kinda loud and take up desk space. A Mac Mini with 16 GB of unified memory could probably reach 10-15 tokens per second for $600 or so, which would be a lot slower than a full external GPU but also silent.
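As a rough sketch, the estimates above can be turned into a dollars-per-token-per-second figure and a wait time for a single long response. The prices and speeds are the ballpark guesses from the paragraph above, not benchmarks; the Mac Mini speed uses the midpoint of the 10-15 range, and the 10,000-token response size is a made-up number for illustration.

```python
# Ballpark figures from the post; these are estimates, not measured benchmarks.
OPTIONS = {
    "SBC (Raspberry Pi, Orange Pi, Jetson Nano)": {"cost_usd": 500, "tokens_per_sec": 5},
    "External GPU, 16 GB": {"cost_usd": 700, "tokens_per_sec": 40},
    # Midpoint of the 10-15 tokens/sec range quoted for the Mac Mini.
    "Mac Mini, 16 GB unified memory": {"cost_usd": 600, "tokens_per_sec": 12.5},
}


def dollars_per_tps(cost_usd, tokens_per_sec):
    """Purchase price divided by generation speed (lower is better)."""
    return cost_usd / tokens_per_sec


def minutes_to_generate(n_tokens, tokens_per_sec):
    """Minutes needed to generate n_tokens at a steady speed."""
    return n_tokens / tokens_per_sec / 60


def summarize(options, n_tokens=10_000):
    """Return one line of text per option. n_tokens is a hypothetical response size."""
    lines = []
    for name, spec in options.items():
        price = dollars_per_tps(spec["cost_usd"], spec["tokens_per_sec"])
        wait = minutes_to_generate(n_tokens, spec["tokens_per_sec"])
        lines.append(f"{name}: ${price:.2f} per token/sec, {wait:.1f} min for {n_tokens} tokens")
    return lines


for line in summarize(OPTIONS):
    print(line)
```

By this measure the external GPU works out to $17.50 per token/sec, the Mac Mini to $48, and the SBC to $100, which is the "lot of money for not a lot of speed" problem in numbers.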

Honestly, none of these options are super attractive right now. I wouldn’t mind building a DIY rig with an SBC, but that’s a lot of money for not a lot of speed. I wouldn’t mind getting a Mac, but while it would likely be easier to set up than a Raspberry Pi and could run larger models, it wouldn’t work much faster than the Pis. The benefit of either an SBC or a Mac Mini is I could set them up and put them in some unused corner of the house. Even if the GPU enclosure route is more power and speed per dollar, it would be both loud and tied to my PC at all times.

None of these solutions are perfect, and pretty much all of them combine a high price with only a modest increase over my current computing abilities.

Anyhow, I broke down and gave $10 to OpenRouter.ai.

This is not an endorsement – it’s just what I settled on using after poking at various other options. I’d looked into getting a plan through Alibaba’s Qwen, Kimi AI, Groq,[3] DeepSeek, as well as other LLM API aggregators like Together.AI. OpenRouter.ai doesn’t charge for 50 daily API calls to a few of their “free” models, but if I carry a $10 credit balance I can have 1,000 calls per day and use more models. It was easy to kick the tires on their free plan, find it could work well enough for my purposes, hand them $10,[4] and get access to 20x more API calls per day.
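For anyone curious what one of those API calls looks like, here is a minimal sketch using only the Python standard library. It assumes OpenRouter's OpenAI-compatible chat completions endpoint; the model slug `openai/gpt-oss-120b`, the `OPENROUTER_API_KEY` environment variable name, and the helper names `build_request` and `ask` are assumptions made for illustration, not something taken from the post.

```python
import json
import os
import urllib.request

# OpenRouter exposes an OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(prompt, model="openai/gpt-oss-120b", api_key=None):
    """Build (but do not send) a chat completion request.

    The default model slug and the environment variable name are assumptions.
    """
    api_key = api_key or os.environ.get("OPENROUTER_API_KEY", "")
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt, **kwargs):
    """Send the request and return the text of the first reply (one API call)."""
    with urllib.request.urlopen(build_request(prompt, **kwargs), timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Calling `ask("Summarize this error message: ...")` spends one call from the daily allowance, so a long agent-style session can burn through a 50-call budget quickly.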

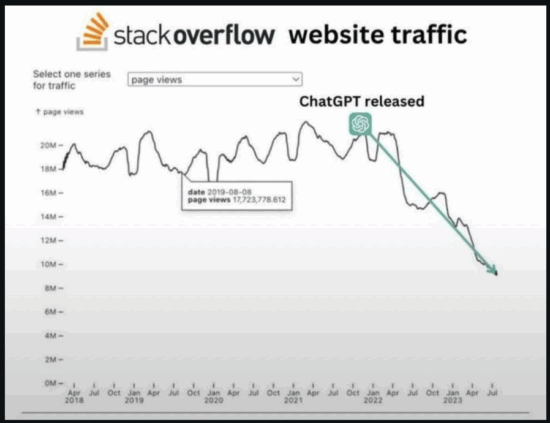

If I’m going to use an LLM and am still determined to avoid OpenAI/ChatGPT, Anthropic/Claude, Elon/Grok, Google/Gemini, and their ilk, I have to turn to other models. I need something that’s better than the barren modern StackOverflow but doesn’t need to be a giant evil LLM either. I’m having a fair bit of success with GPT-OSS 120B, MiniMax M2.5, and Qwen models.

I’m not doing anything groundbreaking. I’d restarted the virtual assistant project from scratch a few weeks ago and am just working on getting the pieces operational. These skills aren’t anything wild – control over my PC’s media functions, modest automated regular downloading of files, communication over the Matrix protocol, etc. Even the wakeword, STT,[5] and TTS[6] systems aren’t very new. The only “new” thing I’m trying to do is tie these pieces together with a little bit of personality from an LLM.

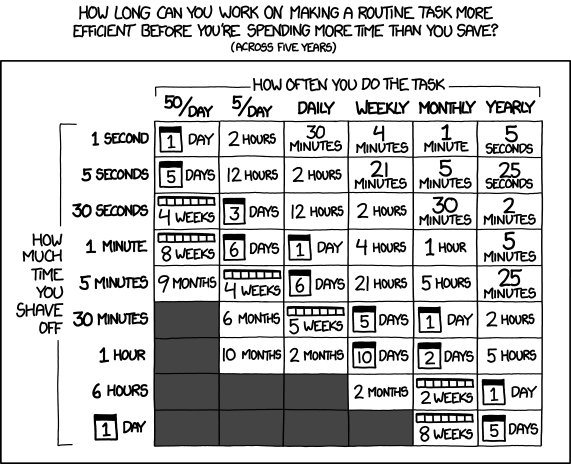

Even without groundbreaking innovations, it’s interesting to see the “cost” of this inference. Yesterday I used approximately 12 million tokens, largely with GPT-OSS 120B. Right now Claude is about $1/M tokens for Haiku, $3/M tokens for Sonnet, and $5/M tokens for Opus.[7][8] It looks like the going rate for GPT-OSS 120B is probably about $0.04/M tokens. Having used Claude models last month and GPT-OSS now, I can say Haiku is very useful, but their other models aren’t 3x and 5x more useful. But, more importantly, there is no way Haiku is 25x better or that Opus is 125x better than GPT-OSS 120B. I don’t doubt these models might cost that much more to develop and run, but I’m just not seeing a jump in utility that justifies these costs. I’ll admit that Haiku could probably have done the job in half the tokens, but even so it feels like there’s an upper limit to how useful an LLM could be. Or, rather, an upper limit to how useful an LLM could be to me.
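The arithmetic behind those multiples is easy to check. The sketch below uses the per-million-token prices quoted above and, as a simplification, bills every one of the 12 million tokens at the input price; real bills would differ because output tokens cost more. The function names are made up for illustration.

```python
# Input prices in USD per million tokens, as quoted in the post.
PRICE_PER_M = {
    "GPT-OSS 120B": 0.04,
    "Claude Haiku": 1.00,
    "Claude Sonnet": 3.00,
    "Claude Opus": 5.00,
}

TOKENS_USED = 12_000_000  # roughly one day of use, per the post


def cost_usd(tokens, price_per_million):
    """Cost of a token count at a flat per-million price (simplification)."""
    return tokens / 1_000_000 * price_per_million


def price_ratio(model, baseline="GPT-OSS 120B"):
    """How many times more expensive a model is than the baseline."""
    return PRICE_PER_M[model] / PRICE_PER_M[baseline]


for model, price in PRICE_PER_M.items():
    day_cost = cost_usd(TOKENS_USED, price)
    print(f"{model}: ${day_cost:.2f} for the day, {price_ratio(model):.0f}x the baseline price")

# Even if Haiku needed only half the tokens for the same work:
haiku_half = cost_usd(TOKENS_USED / 2, PRICE_PER_M["Claude Haiku"])
print(f"Haiku at half the tokens: ${haiku_half:.2f}")
```

Under those assumptions the day costs about $0.48 on GPT-OSS 120B versus $12 on Haiku, $36 on Sonnet, and $60 on Opus. Haiku at half the tokens would still be $6, or 12.5x the GPT-OSS bill.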

I just read an interesting blog post / article specifically about Anthropic’s recent publicity blitz / stunt regarding their “Mythic” model. They are supposedly not releasing the model to the public because it is so smart and dangerous. Suffice it to say, the author makes a convincing case that Anthropic’s claims are smoke and mirrors. One particular section struck a chord with me:

[W]hat am I getting for $25 per million input tokens that I cannot get from the open-weights ecosystem for more than two orders of magnitude less — roughly 227× cheaper, at eleven cents per million?

What, indeed?

As much as I like to fiddle with little gadgets, make and tinker with things, and even like the odd new shiny toy, I’m not a fan of shoving email/push notifications/cloud/crypto/NFT/blockchain/wifi/mesh/AI into every damn thing. I don’t need push notifications from my toaster, don’t need to preheat my oven before I get home, don’t want to have an AI analyze the mustard collection in my fridge and offer recipes.

If an LLM like GPT-OSS 120B, released in August of 2025, can handle meaningful coding tasks swiftly, what more do regular people really need of an LLM? I’m not sure they need anything more. I do think large corporations, data brokers, and governments are probably already licking their lips at the idea of being able to build better profiles for consumers.[9][10][11]

Perhaps one day I’ll try to bolt on some features that require some novel problem solving – like the ability to research things on the internet, check emails, draft email replies / queries, maybe even do some light scheduling or administrative work.

1. What a funny phrase, “kick start”. I wonder if people mostly think of the crowdfunding platform rather than its original usage?

2. It does get bogged down in very large PDFs and spreadsheets.

3. NOT Grok. Groq is, as best as I understand them, a chip company that builds devices that can run inference on medium-sized LLMs very quickly.

4. Plus credit card processing fees.

5. Speech to text.

6. Text to speech.

7. These are the “input” $/M token prices. Claude’s “output” generation $/M token prices are 5x the input cost. I’m just trying to keep their pricing plan information simple/streamlined for ease of reading and reference.

8. For the curious, ChatGPT’s pricing is $0.20/M tokens for their 5.4 nano model, 5.4 mini is $0.75/M tokens, and their flagship 5.4 model is $2.50/M tokens.

9. I was going to say “users”, but really, the regular people here aren’t the “users” – the companies and governments are. I may very well need to start calling people “usees”.

10. Use-ees?

11. It sounds good in my head, but doesn’t seem to track properly when typed.