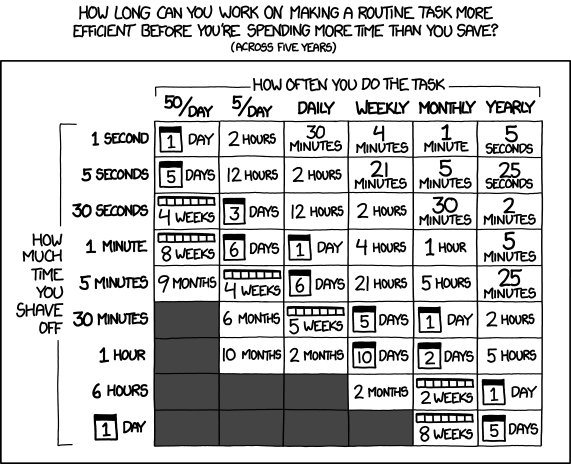

About two months ago[1] I signed up for a frontier LLM / AI subscription. It was the lowest plan at Anthropic so I could use Claude Code. I have a small website business[2] that had a lot of stuff broken for a while. Although I had paid a few hundred dollars to a few different developers and even tried to hire several more to help, I wasn’t able to get anyone to help out or write a single line of code. It’s not that fixing the various code problems within a WordPress plugin is beyond me,[3] but more that tracking down and fixing a bazillion little problems would have been extremely time consuming[4] and I just didn’t have the time.

Okay, enough justifications – I signed up for Anthropic at $20/month and honestly, it was fantastic. I have built out two or three big projects, easily a dozen medium projects, and I have no idea how many minor items. I could go from idea to description to implementation so much faster than I could have alone, it’s not even funny. I’m confident I will keep using several of the things I’ve built for a very long time. The $20/month plan has its limitations – you have a limited amount of amorphous compute you can use during 5-hour stretches as well as a limited amount you can use during a weekly period. During “non-peak” hours you have more amorphous compute. I know you get a ton more compute with the $200/month plan, and honestly it’s almost certainly worth it to a full time developer, but I have so many misgivings about funding companies whose value proposition involves boiling oceans of drinking water, slurping up energy, enabling surveillance states, and allowing computers to make decisions in wartime.

Anyhow, I cancelled my subscription today just before it was about to renew for the second time. I’ve given Anthropic $40 of my money and gotten well more than that in value, so I’m fairly content with that transaction. But, now that my bigger projects are done I don’t have a need for continued use and can make do with free options or roll code by hand.

I was tempted. I’m still tempted. If I paid several hundred dollars to real humans and received nothing, I could absolutely find a way to spend $240/year to enable me to build more complicated things faster. Even without these justifications[5] I can absolutely afford $20/month.[6] But, much like an evil ring that grants you some modest powers, I’m pretty sure the hidden costs just aren’t worth it.

I wondered when I started using a paid LLM again[7] how long I would keep paying for it. I probably got value out of ChatGPT for about two or three months and after that I mostly kept it out of convenience, inertia, and to make stupid pictures.[8] I stopped using it because I wasn’t getting steady value out of it and I didn’t like continuing to fund OpenAI. Would I keep the Claude subscription for months longer than I was really using it – out of the convenience of having a frontier LLM on tap?

It didn’t hurt that it felt like Claude was steadily getting less intelligent and helpful.[9] If I were a more paranoid or cynical person I would believe cell phone manufacturers make their phones slow down just as the new flagship phones are released and frontier LLM companies dumb their models down when the newest pricier models come out.

As frugal as I am, I’m willing to pay for a frontier model because they’re incredibly helpful in realizing ideas. However, I don’t want to support most of the frontier companies,[10] their evil alliances,[11] or side quests to block other AI companies from developing, devour the earth’s energon cubes, and boil the oceans.

I mean, why can’t I just do this on a small scale at home? Part of the problem is that even trying to get my hands on a very small PC is becoming unnecessarily expensive. At the time I’m writing this, the Raspberry Pi 5 16GB[12] is going for $305, closing in on triple the initial MSRP of $120. Adding a case, some cables, the AI HAT+ 2, a heat sink / cooler, and a beefier power supply would probably bring the cost to $600. I could buy a whole extra brand new desktop PC for that price. Or just use my current desktop to run an LLM in the background.

Which is what I’m doing literally right now.

I’m running LM Studio on my modest PC[13] to serve up small LLMs to VS Code and Cline, to go through some small Python codebases to help me with some projects. After quite a lot of trial and error, I’ve basically settled on Qwen 3.5 9B using a 4-bit quantization as the best model I can run on my machine that can actually help. It is punishingly slow… but it does work. Something that might have taken a frontier model 5-10 seconds to do takes my machine probably an hour. Some light web research suggests that a frontier model is probably operating around 50-100 tokens per second while my machine can manage a blazing 1-2 tokens per second.
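For anyone curious what that looks like outside of VS Code, here is a minimal sketch. It assumes LM Studio's local server is switched on with its OpenAI-compatible API at the default `http://localhost:1234/v1`, and the model identifier `qwen-9b-q4` is a made-up placeholder, not the real name. The `generation_seconds` helper is just back-of-the-envelope arithmetic on the token rates above. It ignores prompt processing and the many round trips a tool like Cline makes, which is presumably where a lot of the hour goes.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is running with its OpenAI-compatible
# API on the default address. Change this if your setup differs.
BASE_URL = "http://localhost:1234/v1"
# Hypothetical identifier; use whatever name LM Studio shows for the loaded model.
MODEL = "qwen-9b-q4"


def build_chat_request(prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for the local server."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generation_seconds(tokens: int, tokens_per_second: float) -> float:
    """Time to generate `tokens` at a steady rate, ignoring prompt processing."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return tokens / tokens_per_second


if __name__ == "__main__":
    # Rates quoted in the post: 50-100 tok/s (frontier) vs 1-2 tok/s (local).
    for label, rate in [("frontier, 50 tok/s", 50.0), ("local, 1 tok/s", 1.0)]:
        print(f"{label}: {generation_seconds(500, rate):.0f} s for 500 tokens")
    # To actually query the server (requires LM Studio to be running):
    # with urllib.request.urlopen(build_chat_request("Hello"), timeout=3600) as resp:
    #     print(json.load(resp)["choices"][0]["message"]["content"])
```

The request builder is kept separate from the sending so the slow part (waiting on the model) stays an explicit, opt-in step.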

Since I’m rambling here anyhow… I’m going to backtrack slightly, just so I can give a little context. Sometimes I’ll find myself stuck in a cognitive loop of frustration and rabbit holes and decision paralysis. Writing these things down lets me ~~excise~~ exorcise[14] these thought-demons at the cost of inflicting them upon my legions of loyal readers. I find jotting things down in a semi-organized fashion means I don’t have to keep all the little pieces of ideas swirling around in my brain. I can finally relax, knowing they’ve been realized… somewhere. This is why I’ll jot down some sketches, create some scraps of code, or tuck a note away in Standard Notes.[15][16] Well, working with frontier models makes me hate their rate limits and everything they stand for, which makes me want to build my own. Where was I?

Right. I’ve been swirling around the vortex of working with frontier LLMs, getting sick of paying for and/or supporting them, trying some free API resources, bumping into their free tier limits, falling down a rabbit hole investigating what it would cost to build a machine of my own, getting disgusted at the cost and figuring I’ll just run them on my current machine, getting slightly frustrated at the time it takes to do anything meaningful, and wondering about maybe throwing a few dollars at a frontier LLM … just to get this project finished. But, I don’t need a frontier LLM right now and I don’t need to get things done fast … especially when I should be doing the work I perform in exchange for the money I use to pay my mortgage.

In some ways, having a very slow LLM at my disposal is actually helpful. Yes, it does mean I have to listen to my little PC’s fan hum to itself for an hour to accomplish something kinda basic. But, then again… it’s busy working on something, freeing me up to do other things.

Like write blog posts.

Plus, there are some possibly realistic uses for this kind of super low cost basic research / experimentation. I’ve been using this cobbled together system of various LLMs, frontier and local, plus my modest Python skills, to try and create a semi-useful virtual assistant. I’ve connected to a few very small LLMs so it can act as a human-ish interface for useful scripts,[17] connected it over the Matrix protocol so I can talk to it securely from a phone even when I’m not home, and now that I know which kinds of models would work for some simple Python code generation, I could have a useful slow coding helper wherever I need it. Frankly, the main use of the coding assistant for me right now is building deterministic scripts that help me on a daily basis. There are other directions I could imagine taking this project from here. By adding a Meshtastic node to my home setup and carrying a small Meshtastic device with me, I could still stay in touch with my very slow and low bandwidth PC wherever I was. With a solar panel or power supply, I could even run all this entirely off grid. Going completely off grid isn’t something I’m super into, I like having easy access to broadband and grocery stores, but it sure would be neat and a good excuse to buy a few small Meshtastic devices.
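To make the "human-ish interface for useful scripts" idea a bit more concrete, here is a rough sketch of the deterministic half. Everything in it is hypothetical: the `HANDLERS` registry, the `remind` and `download` commands, and the `dispatch` function are illustrations, not my actual code. The Matrix transport is left out entirely, and a plain first-word match stands in for the small LLM that would turn free text into a command name.

```python
from typing import Callable, Dict

# Hypothetical registry mapping command names to deterministic handlers.
HANDLERS: Dict[str, Callable[[str], str]] = {}


def command(name: str):
    """Decorator that registers a handler function under a command name."""
    def register(func: Callable[[str], str]) -> Callable[[str], str]:
        HANDLERS[name] = func
        return func
    return register


@command("remind")
def remind(args: str) -> str:
    # Placeholder: a real script would schedule something here.
    return f"Reminder noted: {args}"


@command("download")
def download(args: str) -> str:
    # Placeholder: a real script would fetch the file here.
    return f"Queued download: {args}"


def dispatch(message: str) -> str:
    """Route a 'command rest-of-text' message to its registered handler."""
    name, _, args = message.strip().partition(" ")
    handler = HANDLERS.get(name.lower())
    if handler is None:
        return "Unknown command. Try: " + ", ".join(sorted(HANDLERS))
    return handler(args.strip())


if __name__ == "__main__":
    print(dispatch("remind take out the trash"))
    print(dispatch("dance"))
```

Keeping the handlers deterministic means the slow model only has to pick a command, and the script does the actual work the same way every time.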

Of course, once I start spinning around the idea of a Meshtastic node, I’ll want to bundle it with a Raspberry Pi 5…


1. You know, before our latest war and revelations AI companies were helping power the country’s military.

2. Very boring

3. I’m kinda decent at plugin dev for someone with zero training

4. Cue meme of Don Draper yelling “That’s what the money is for!”

5. Forgive the humble brag

6. Just look at all these streaming services I pay for.

7. I paid for ChatGPT in 2023 and 2024

8. I made several “make it more” style pictures…

9. I was going to find a link to support this … sense – but there were honestly too many links to too many articles I didn’t want to vet. Suffice it to say the “vibe” I got is that as of April 2026, I’m not the only one who feels like Claude got stupider. My impression of the consensus is that Claude got too many users, resource usage went up, and quality went down.

10. OpenAI, Anthropic, Grok/Twitter/Elon, Google/Evil, or even Microsoft

11. billionaires, oligarchs, fascists, surveillance states, Bezos, Musk, or certain president-grifters

12. If you can find one!

13. Bought long before RAM-pocalypse

14. Sheesh.

15. I used to use plain text files, then Google Keep, but you know what – this service is great and it’s not Google or evil

16. As far as I know

17. Downloading files automatically, setting reminders, etc